Kernel Stack Desirable for security:

Author : enteringmalboro | Published Date : 2020-08-28

E.g., illegal parameters might be supplied. What's on the kernel stack? Upon entering kernel mode, the task's registers are saved on the kernel stack, e.g., the address of the task's user-mode stack.

The PPT/PDF document "Kernel Stack Desirable for security:" is the property of its rightful owner. Permission is granted to download and print the materials on this website for personal, non-commercial use only, and to display them on your personal computer, provided you do not modify the materials and you retain all copyright notices contained in them. By downloading content from our website, you accept the terms of this agreement.

Kernel Stack Desirable for security: Transcript

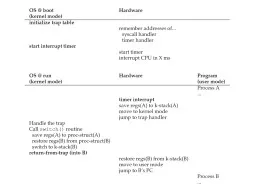

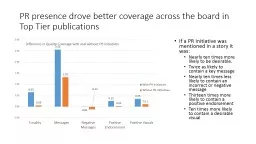

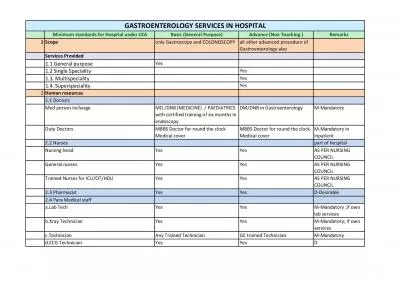

E.g., illegal parameters might be supplied. What's on the kernel stack? Upon entering kernel mode, the task's registers are saved on the kernel stack, e.g., the address of the task's user-mode stack.
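The transcript says that a task's registers, including the address of its user-mode stack, are saved on the kernel stack when the task enters kernel mode. A minimal user-space sketch of that idea in C follows. It is a simulation under stated assumptions, not how any particular kernel does it: the `saved_regs` layout, the `task` structure, the 4096-byte stack size, and the function names `task_init`, `kernel_enter`, and `kernel_exit` are all hypothetical and chosen only for illustration.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical register frame saved on kernel entry (illustrative layout). */
struct saved_regs {
    uint64_t user_ip;   /* user-mode instruction to resume at */
    uint64_t user_sp;   /* address of the task's user-mode stack */
    uint64_t flags;     /* processor flags at the time of entry */
    uint64_t gpr[4];    /* a few general-purpose registers */
};

#define KSTACK_SIZE 4096 /* assumed size, for the simulation only */

/* Each task owns a private kernel stack, separate from its user stack. */
struct task {
    uint8_t  kstack[KSTACK_SIZE];
    uint8_t *ksp;       /* simulated kernel stack pointer; grows downward */
};

/* An empty stack has its pointer one past the highest byte. */
void task_init(struct task *t)
{
    assert(t != NULL);
    t->ksp = t->kstack + KSTACK_SIZE;
}

/* Simulate kernel entry: push the user-mode register state.
 * Returns 0 on success, -1 if the frame would overflow the kernel stack. */
int kernel_enter(struct task *t, const struct saved_regs *user_state)
{
    assert(t != NULL && user_state != NULL);
    if ((size_t)(t->ksp - t->kstack) < sizeof *user_state)
        return -1;
    t->ksp -= sizeof *user_state;
    memcpy(t->ksp, user_state, sizeof *user_state);
    return 0;
}

/* Simulate return to user mode: pop the most recently saved frame.
 * Returns 0 on success, -1 if no complete frame is on the stack. */
int kernel_exit(struct task *t, struct saved_regs *out)
{
    assert(t != NULL && out != NULL);
    if ((size_t)((t->kstack + KSTACK_SIZE) - t->ksp) < sizeof *out)
        return -1;
    memcpy(out, t->ksp, sizeof *out);
    t->ksp += sizeof *out;
    return 0;
}
```

In this sketch the saved frame lives in memory that only the (simulated) kernel writes to, which reflects the security motivation in the title: the kernel does not have to trust a user-supplied stack pointer while it is running. A caller would invoke `task_init` once, then pair each `kernel_enter` with a later `kernel_exit` to recover the saved `user_sp`.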
