CS33: Introduction to Computer Organization

Author : khadtale | Published Date : 2020-06-23

CS33: Introduction to Computer Organization: Transcript

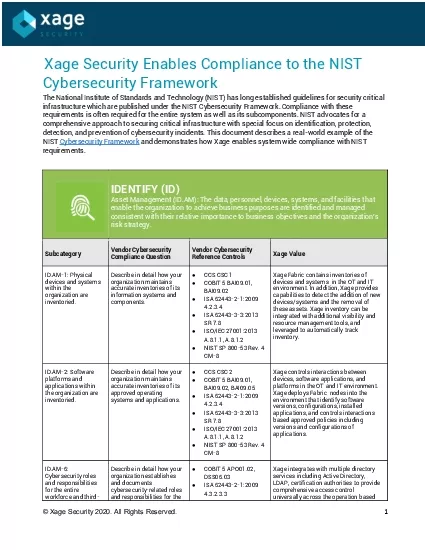

Week 8 Discussion Section. Atefeh Sohrabizadeh (atefehszcsuclaedu), 11/22/19.

Agenda: Virtual Memory; Threading and Basic Synchronization.

Virtual Memory: As demand on the CPU increases, processes slow down.