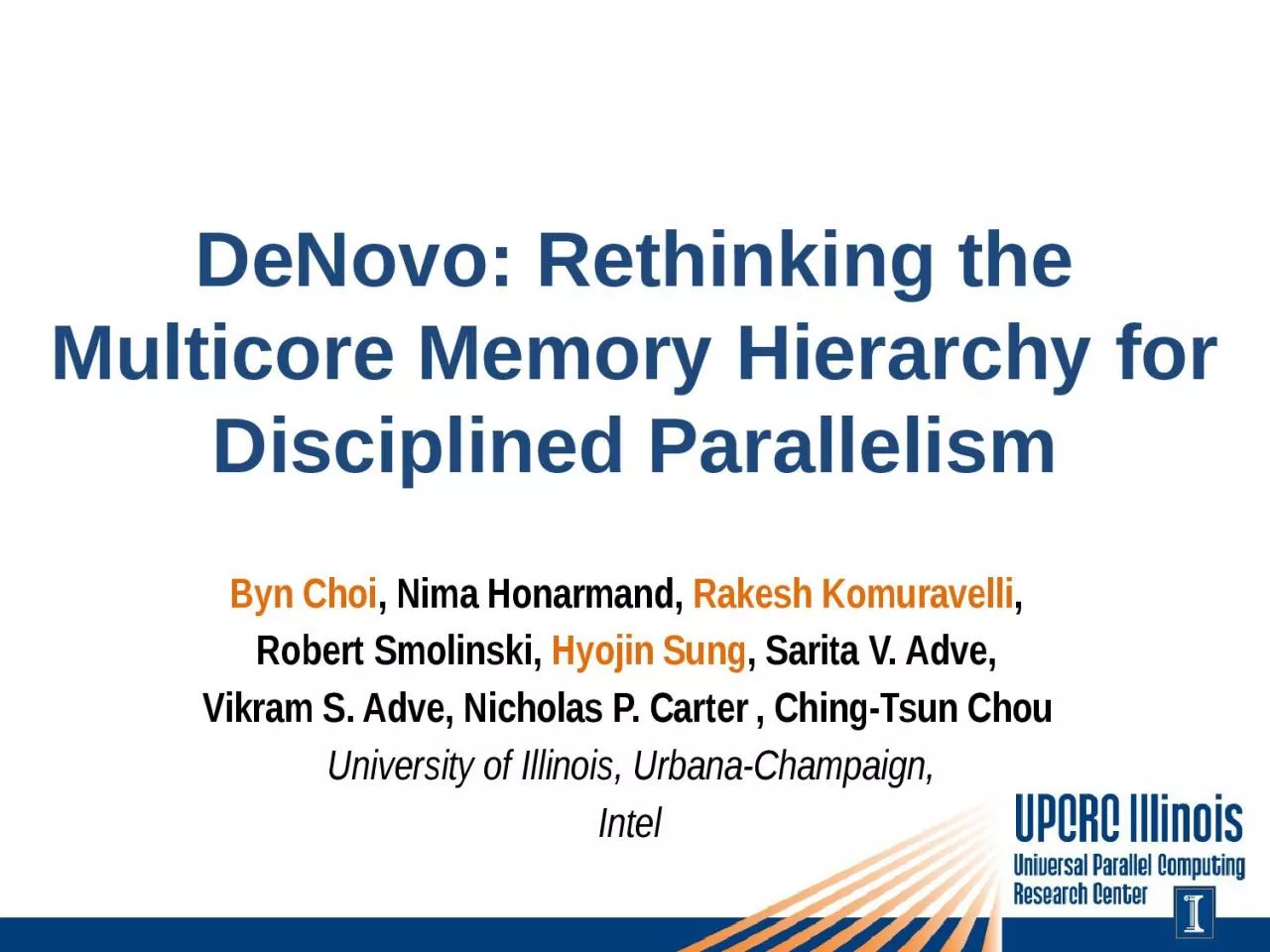

DeNovo: Rethinking the

Author : scarlett | Published Date : 2022-06-15

Multicore Memory Hierarchy for Disciplined Parallelism. Byn Choi, Nima Honarmand, Rakesh Komuravelli, Robert Smolinski, Hyojin Sung, Sarita V. Adve, Vikram

The PPT/PDF document "DeNovo: Rethinking the" is the property of its rightful owner. Permission is granted to download and print the materials on this website for personal, non-commercial use only, and to display them on your personal computer, provided you do not modify the materials and you retain all copyright notices contained in them. By downloading content from our website, you accept the terms of this agreement.

DeNovo: Rethinking the: Transcript

Multicore Memory Hierarchy for Disciplined Parallelism. Byn Choi, Nima Honarmand, Rakesh Komuravelli, Robert Smolinski, Hyojin Sung, Sarita V. Adve, Vikram S.

Rethinking Hardware for Disciplined Parallelism. Byn Choi, Rakesh Komuravelli, Hyojin Sung, Rob Bocchino, Sarita Adve, Vikram Adve. Other collaborators: Languages: Adam Welc, Tatiana Shpeisman, Yang Ni (Intel).

Hardware Cache Coherence and Some Implications. Rakesh Komuravelli, Sarita Adve, Ching-Tsun Chou. University of Illinois @ Urbana-Champaign, Intel. denovo@cs.illinois.edu. Motivation. Today's shared memory systems are more complex than ever.

Rethinking of the Memory Hierarchy. Sarita Adve, Vikram Adve, Rob Bocchino, Nicholas Carter, Byn Choi, Ching-Tsun Chou, Stephen Heumann, Nima Honarmand, Rakesh Komuravelli, Maria Kotsifakou,

A Hardware Architect's Perspective. Sarita Adve, Vikram Adve, Rob Bocchino, Nicholas Carter, Byn Choi, Ching-Tsun Chou, Stephen Heumann, Nima Honarmand, Rakesh Komuravelli, Maria Kotsifakou,
