PPT-Post hoc tests

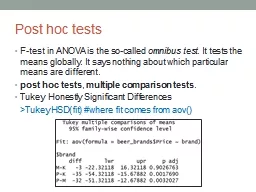

The F-test in ANOVA is the so-called omnibus test. It tests the means globally and says nothing about which particular means are different. Post hoc tests (multiple comparisons) are used to find out which means differ.
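The distinction between the omnibus test and the follow-up comparisons can be illustrated with a minimal sketch. The data and the helper names (`omnibus_f`, `pairwise_t`) are made up for illustration and do not come from the presentation. The sketch computes the one-way ANOVA F statistic, then a t statistic for each pair of groups using the pooled within-group mean square, and finally a Bonferroni-adjusted significance level, which is one common way (among several) to account for multiple comparisons.

```python
from itertools import combinations
from math import sqrt
from statistics import mean

# Hypothetical data: three groups, invented for illustration only.
groups = {
    "A": [4.0, 5.0, 6.0],
    "B": [5.0, 6.0, 7.0],
    "C": [10.0, 11.0, 12.0],
}


def omnibus_f(groups):
    """Return (F, mean square within, df within) for a one-way ANOVA."""
    all_values = [x for g in groups.values() for x in g]
    grand_mean = mean(all_values)
    k, n = len(groups), len(all_values)
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups.values())
    ss_within = sum((x - mean(g)) ** 2 for g in groups.values() for x in g)
    ms_between = ss_between / (k - 1)
    ms_within = ss_within / (n - k)
    return ms_between / ms_within, ms_within, n - k


def pairwise_t(groups, ms_within):
    """Return a t statistic per pair of groups, using the pooled error term."""
    stats = {}
    for a, b in combinations(groups, 2):
        diff = mean(groups[a]) - mean(groups[b])
        std_err = sqrt(ms_within * (1 / len(groups[a]) + 1 / len(groups[b])))
        stats[(a, b)] = diff / std_err
    return stats


f_stat, ms_within, df_within = omnibus_f(groups)
t_stats = pairwise_t(groups, ms_within)

# Bonferroni: divide the overall alpha by the number of comparisons.
alpha = 0.05
bonferroni_alpha = alpha / len(t_stats)

print(f"Omnibus F = {f_stat:.2f} (df within = {df_within})")
for (a, b), t in t_stats.items():
    print(f"{a} vs {b}: t = {t:.3f}")
print(f"Per-comparison alpha after Bonferroni: {bonferroni_alpha:.4f}")
```

The F statistic alone only signals that the group means are not all equal. The pairwise statistics show where the difference lies: here the comparisons involving group C are far larger in magnitude than A vs B. Turning each t statistic into a p-value requires the t distribution with `df_within` degrees of freedom, which the standard library does not provide, so that step is left out of this sketch.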


