Markov chains

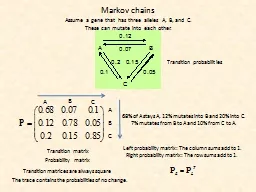

Markov chains. Assume a gene that has three alleles, A, B, and C. These can mutate into each other. Topics: transition probabilities, transition matrix, probability matrix, left probability.
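The three-allele setup can be illustrated with a minimal sketch. The mutation probabilities below are hypothetical values chosen only for illustration (the presentation's own numbers are not available), and the names `P`, `step`, and `evolve` are assumptions introduced here. The sketch assumes the common convention in which each row of the transition matrix holds the probabilities of moving from one allele to each of the others, so every row sums to 1, and the allele distribution is a row vector multiplied on the left of the matrix.

```python
# Hypothetical per-generation mutation probabilities (illustrative values only).
# Row i gives the probabilities that allele i becomes A, B, or C.
ALLELES = ["A", "B", "C"]
P = [
    [0.90, 0.05, 0.05],  # from A
    [0.10, 0.80, 0.10],  # from B
    [0.20, 0.20, 0.60],  # from C
]


def step(dist, matrix):
    """Advance a distribution one generation: new_j = sum_i dist_i * matrix[i][j]."""
    n = len(matrix)
    return [sum(dist[i] * matrix[i][j] for i in range(n)) for j in range(n)]


def evolve(dist, matrix, generations):
    """Apply the one-generation update repeatedly."""
    for _ in range(generations):
        dist = step(dist, matrix)
    return dist


# Start with a population that carries only allele A.
start = [1.0, 0.0, 0.0]
after_one = step(start, P)
after_many = evolve(start, P, 50)

for name, share in zip(ALLELES, after_many):
    print(f"{name}: {share:.4f}")
```

After one generation the distribution equals the first row of `P`, since the whole population started as allele A. After many generations the distribution typically settles toward a stationary distribution that no longer depends on the starting point, provided every allele can reach every other, as is the case for these illustrative values.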


