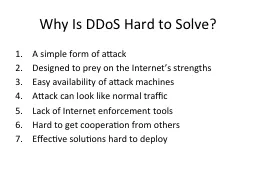

Why Is DDoS Hard to Solve?

Author: alida-meadow | Published: 2016-03-11

- It is a simple form of attack.
- It is designed to prey on the Internet's strengths.
- Attack machines are easy to obtain.
- Attack traffic can look like normal traffic.
- The Internet lacks enforcement.

