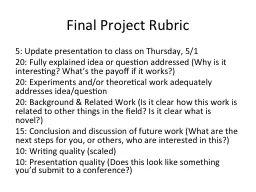

Final Project Rubric

5: Update presentation to class on Thursday 5/1

20: Fully explained idea or question addressed. Why is it interesting? What's the payoff if it works?

20: Experiments and/or
