PPT-Von Neumann

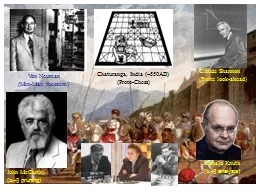

MinMax theorem; Claude Shannon: finite lookahead; Chaturanga (India, 550 AD): proto-chess; John McCarthy: alpha-beta pruning; Donald Knuth: alpha-beta analysis; Wilmer McLean: The war began in


